ERNIE Image Review 2026 - Is It Really the Best Open-Weight Text-to-Image AI?

We analyzed benchmark performance, architecture, and real output behavior across poster text, cinematic scenes, manga/storyboard structure, multilingual rendering, and multi-panel consistency. For product updates and examples, visit the ERNIE Image site.

By ERNIE Image Editorial Team · Updated April 2026 · Independent evaluation

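TL;DR Summary

Scorecard Highlights

| Category | Score (out of 5) |
|---|---|
| Text Rendering | 5.0 |
| Instruction Following | 5.0 |
| Speed | 4.0 |
| Photorealism | 4.0 |
| Overall | 4.5 |

One-line Verdict: ERNIE Image is best-in-class for text rendering and structured generation.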
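The overall 4.5 matches an unweighted mean of the four category scores. The review does not say how the overall was derived, so treat this as one plausible reading rather than the documented formula:

```python
from statistics import mean

# Category scores from the scorecard (out of 5).
scores = {
    "Text Rendering": 5.0,
    "Instruction Following": 5.0,
    "Speed": 4.0,
    "Photorealism": 4.0,
}

# Assumption: the overall score is an unweighted mean of the categories.
overall = mean(scores.values())
print(f"Overall: {overall:.1f}")  # Overall: 4.5
```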

Why We Tested ERNIE Image

ERNIE Image became one of the most discussed open-weight releases after Baidu's ERNIE team introduced it publicly in April 2026. We tested it because its claims around text rendering, structured generation, and multilingual accuracy bear directly on real creative workflows, which is exactly where many image models still fail.

This review focuses on practical questions: does ERNIE Image actually follow complex instructions, preserve layout logic in multi-element prompts, and keep text readable enough for poster, manga, bilingual packaging, and product marketing use cases?

Architecture Deep Dive

ERNIE Image is built around an 8B-parameter single-stream Diffusion Transformer (DiT) paired with a 3B-parameter Prompt Enhancer. Rather than routing text and visual tokens through separate streams (a dual-stream design), it processes both in one unified sequence, an approach intended to improve cross-modal alignment on structured prompts.
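To make "single-stream" concrete, here is a toy sketch (not ERNIE Image's actual code): text and image tokens are concatenated into one sequence and pass through the same attention operation with the same projections (identity here, standing in for learned Q/K/V weights), so every image token can attend directly to every text token. Real DiT blocks add learned weights, multiple heads, MLPs, and timestep conditioning, all omitted; the token values are made up.

```python
import math

def softmax(xs):
    # Numerically stable softmax over a list of floats.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def single_stream_attention(text_tokens, image_tokens):
    """Toy single-stream self-attention over one unified sequence.
    The same (identity) projection is applied to every token regardless
    of modality; that weight sharing is what 'single-stream' means here."""
    seq = text_tokens + image_tokens  # one unified sequence
    d = len(seq[0])
    scale = 1.0 / math.sqrt(d)
    outputs, weights = [], []
    for q in seq:
        # Scaled dot-product scores against every token in the sequence.
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in seq]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(w[j] * seq[j][c] for j in range(len(seq))) for c in range(d)])
    n_text = len(text_tokens)
    return outputs[:n_text], outputs[n_text:], weights

text = [[1.0, 0.0], [0.0, 1.0]]               # 2 toy text tokens
image = [[0.5, 0.5], [1.0, 1.0], [0.0, 0.0]]  # 3 toy image tokens
text_out, image_out, attn = single_stream_attention(text, image)
# Row 2 is the first image token; columns 0 and 1 are the text tokens it attends to.
print([round(w, 3) for w in attn[2]])
```

In dual-stream designs, each modality typically keeps its own projection weights even when attention is computed jointly; sharing one set of weights across the whole sequence is the distinction this sketch illustrates.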

Unified sequence modeling

Stronger global coherence for prompts containing many objects, attributes, and explicit constraints.

Text-first rendering reliability

Designed to keep in-image text legible under richer composition and typography requests.

Structured panel understanding

Performs better than typical single-shot-first models on multi-panel and storyboard-like layouts, where continuity matters.

Production deployment path

Local deployment is practical with roughly 24 GB of VRAM, and ecosystem tools support a range of workflows.
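A quick back-of-the-envelope check shows where a figure like 24 GB can come from. This sketch assumes bf16 weights (2 bytes per parameter) and that the 8B DiT and the 3B Prompt Enhancer sit on the GPU together; neither assumption is stated in the release material we reviewed, and the parameter counts are rounded:

```python
# Bytes needed to store one parameter in common inference dtypes.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp16": 2, "fp8": 1}

def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Memory for the weights alone, in decimal GB (1 GB = 1e9 bytes).
    Ignores activations, any VAE or other components, caches, and overhead."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9

# Assumption: both models are loaded in bf16 and resident on the GPU together.
dit_gb = weight_memory_gb(8_000_000_000, "bf16")       # 8B DiT
enhancer_gb = weight_memory_gb(3_000_000_000, "bf16")  # 3B Prompt Enhancer
total_gb = dit_gb + enhancer_gb
print(f"DiT {dit_gb:.1f} GB + enhancer {enhancer_gb:.1f} GB = {total_gb:.1f} GB")
# DiT 16.0 GB + enhancer 6.0 GB = 22.0 GB
```

Under these assumptions the weights alone leave only about 2 GB of a 24 GB card for activations, which suggests 24 GB is a practical floor rather than a comfortable margin. Offloading the Prompt Enhancer or using 8-bit weights, where supported, would free headroom.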

In short: the architecture targets instruction fidelity and layout/text control first, then style. That priority is why ERNIE Image often feels more dependable for text-heavy commercial design tasks.

ERNIE Image Benchmark Methodology

We interpret benchmark outcomes through a production lens: text fidelity, instruction following, multilingual robustness, and structured composition reliability. Scores are read as directional signals, then validated against visible outputs and prompt stress tests.

| Aspect | Details |
|---|---|
| Primary Sources | Official ERNIE Image release notes + benchmark disclosures |
| Core Benchmarks | GenEval, LongTextBench, OneIG-ZH, OneIG-EN |
| Evaluation Lens | Instruction control, text rendering, multilingual stability |
| Output Review Scope | Posters/Text, film-like scenes, manga/storyboard, multilingual, multi-panel |
| Comparative Models | FLUX, Midjourney, Stable Diffusion families |
| Testing Window | 2026 review cycle |
| Bias Control | Independent copywriting + no sponsored placement |
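The review does not specify how text fidelity was scored. As one hedged illustration of making "text accuracy" measurable, this sketch computes character error rate (CER): the edit distance between the text a prompt requested and the text read back from the image, divided by the requested length. It assumes OCR output is already available as a string (the OCR step is not shown) and does not reflect the review's actual scoring:

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance (insertions, deletions, substitutions) via row-by-row DP."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if chars match)
            ))
        prev = curr
    return prev[-1]

def character_error_rate(requested: str, ocr_text: str) -> float:
    """CER = edit distance / length of the requested text; 0.0 is a perfect render.
    Convention for an empty request: 0.0 if nothing was rendered, else 1.0."""
    if not requested:
        return 0.0 if not ocr_text else 1.0
    return levenshtein(requested, ocr_text) / len(requested)

print(character_error_rate("THE SEATTLE TIMES", "THE SEATTLE TIMES"))  # 0.0
print(character_error_rate("FRESH", "FRFSH"))                          # 0.2
```

CER is strict about every character, which suits headline and label checks; for long body text, a word-level metric may be more forgiving of minor glyph errors.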

Real Test Results

🖼️ Posters & Graphic Banners

Representative prompt

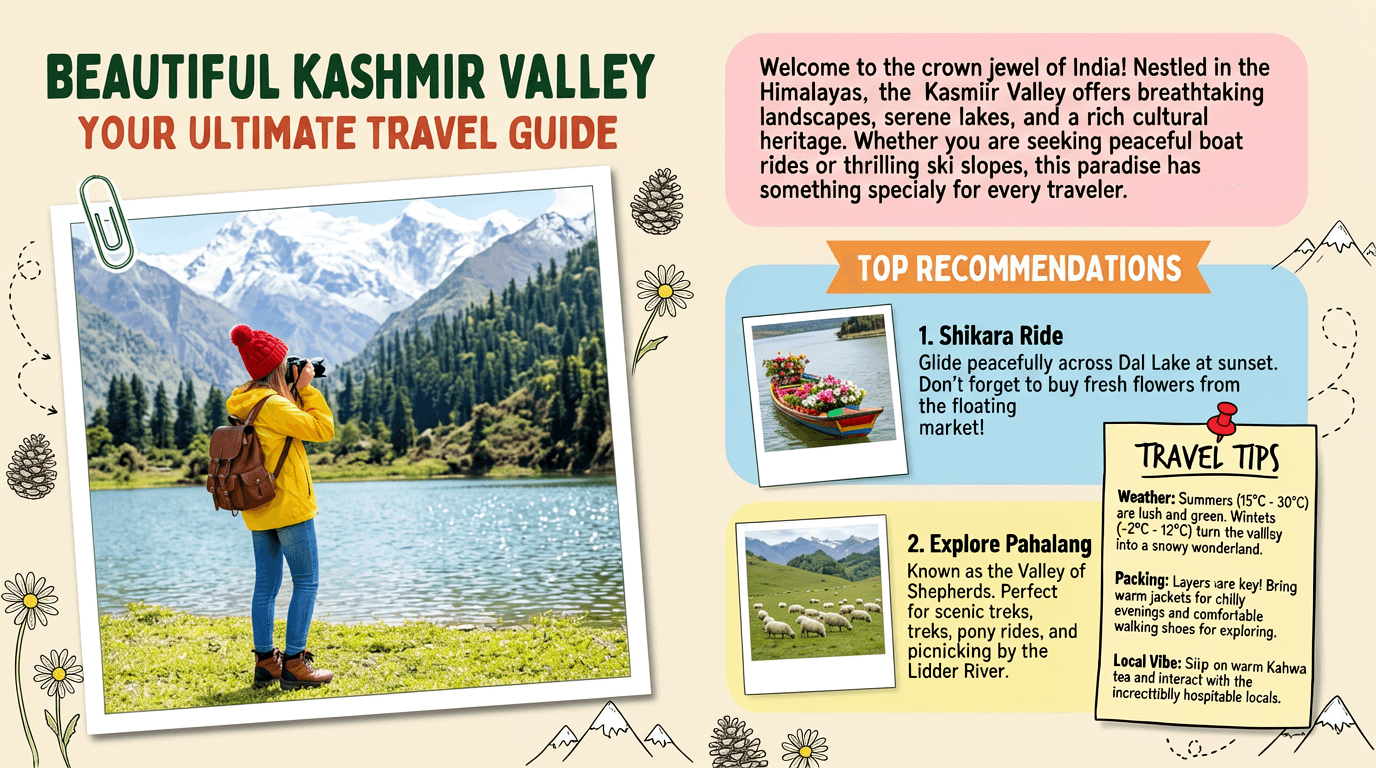

Create image of Magazine feature article [travel] guide page, cute, information dense photo book style magazine feature article page. Add all necessary sections, tips, recommendations, information. add photos for any sections and recommendations if you like. Place the attached person at the precise location of [BEAUTIFUL IN THE KASHMIR VALLEY]. Seamlessly blend the attached person as if they are sightseeing. Approach this task with the understanding that this is a critical, information rich page that will significantly influence visitor numbers, text accuracy is important. Fully use the entire [16:9] page.

Review note

ERNIE Image consistently rendered headline text legibly with stronger letter spacing control than most open-weight peers. Layout intent was preserved without severe text corruption in our poster-format checks.

🎬 Cinematic & Film-Like Photography

Representative prompt

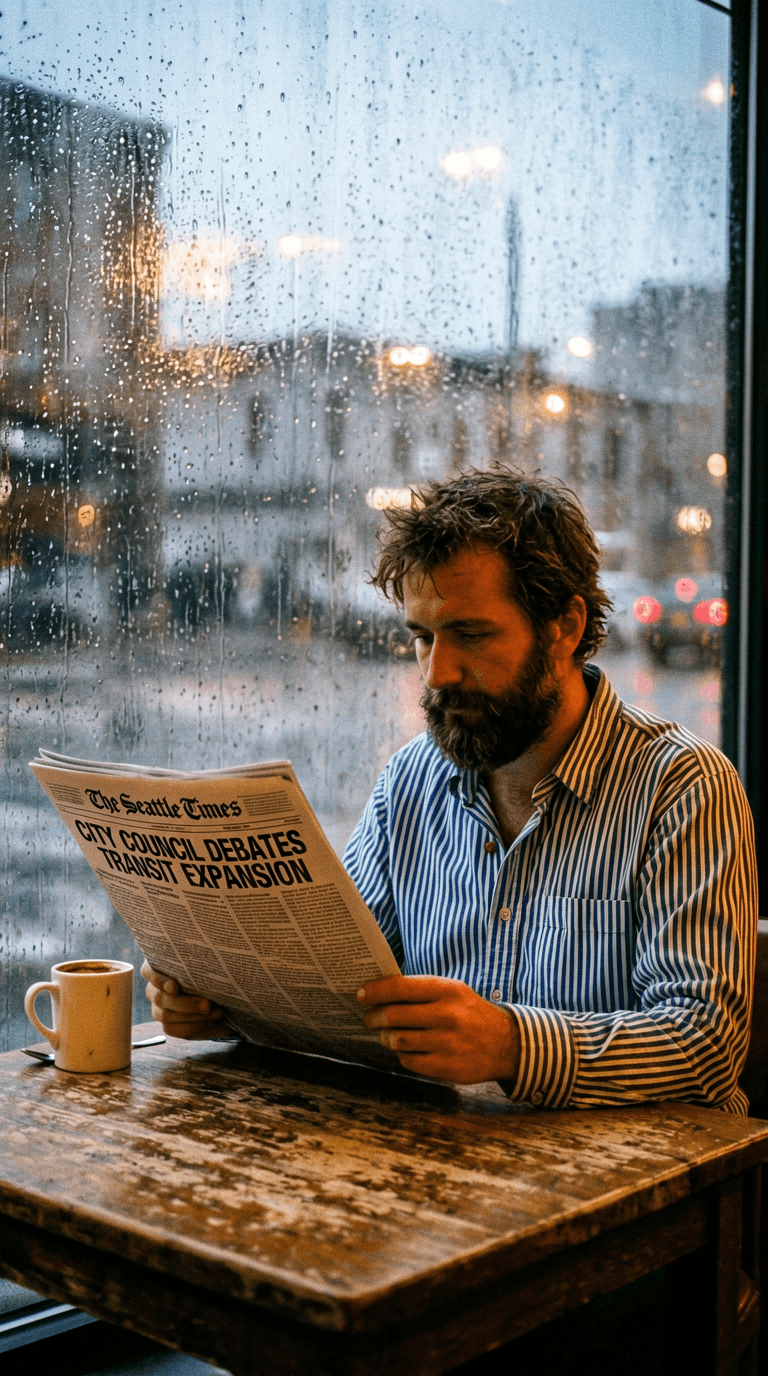

A digitally captured snapshot from 2006, featuring a horizontal wide-format composition with the slightly grainy image quality characteristic of early digital cameras. The main subject is a man with a thick beard, wearing a long-sleeve shirt with horizontal stripes in dark blue and beige, seated inside a Seattle coffee shop, holding an open newspaper with both hands and reading intently. The newspaper's masthead displays clear black uppercase English lettering reading 'The Seattle Times,' with a bold headline below reading 'CITY COUNCIL DEBATES TRANSIT EXPANSION' and a sidebar sub-headline reading 'WEATHER: RECORD RAINFALL CONTINUES.' In front of the man is a distressed solid wood table with scratches of varying depth and a faded patina. On the tabletop, besides the newspaper, sits a white thick-bottomed ceramic mug, with a small silver metal spoon placed beside it. Behind the man is a large glass window occupying most of the background. The glass is covered with dense rain streaks and condensed water droplets, the refraction of the rainwater rendering the street scene outside blurred and indistinct—only a grey-blue overcast sky, the outline of wet asphalt pavement, and the blurred red taillights and yellow headlight halos of cars passing along the street can be made out. The interior of the coffee shop is bathed in warm yellow lighting, and the silhouette of a vintage pendant lamp is faintly reflected on the rain-covered glass window, creating a striking warm-cool color contrast with the cold grey rain scene outside.

Review note

This category showed ERNIE Image's film-like bias: light direction and grain feel were visually coherent, though ultra-photoreal skin and micro-texture can still be softer than FLUX-class outputs.

📐 Manga, Anime & Comic Panels

Representative prompt

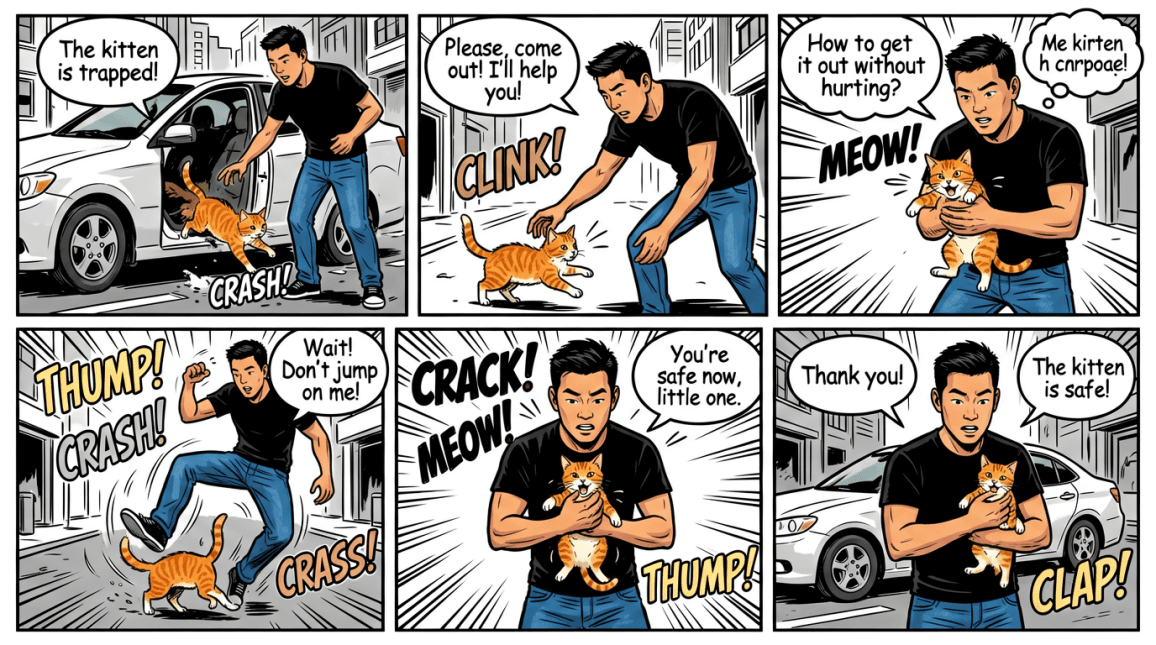

A multi-panel illustration in an American comic book style, featuring bold black outlines, vibrant color fills, and dynamic motion effects. The artwork consists of six panels, telling the story of an Asian male hero wearing a black short-sleeve T-shirt and blue jeans as he rescues a trapped kitten on a city street. [Panel 1]: The hero stands beside a white car with the door open. An orange tabby kitten is jumping out from inside the vehicle. A speech bubble reads, “The kitten is trapped!” with the sound effect “CRASH!” [Panel 2]: The hero bends down, reaching out to the kitten. A speech bubble says, “Please, come out! I’ll help you!” accompanied by the sound effect “CLINK!” [Panel 3]: The hero holds the kitten with both hands as it meows loudly, “MEOW!” A thought bubble appears above his head: “How to get it out without hurting it?” [Panel 4]: The kitten jumps onto the hero, who leans backward in a dynamic pose. Sound effects “THUMP!” and “CRASH!” appear, along with a speech bubble: “Wait! Don’t jump on me!” [Panel 5]: The hero holds the kitten securely in his arms while it continues to meow, “MEOW!” Sound effects “CRACK!” and “THUMP!” are shown, along with the speech bubble: “You’re safe now, little one.” [Panel 6]: The hero gently holds the kitten against his chest, with the white car visible in the background. Speech bubbles read, “Thank you!” and “The kitten is safe!” with the sound effect “CLAP!” The entire piece combines black-and-white line work with bold color blocks, using motion lines and comic-style sound effects to create a tense and energetic rescue scene set in a city street environment.

Review note

Multi-panel continuity remained strong: character identity, outfit, and narrative flow held across frames better than typical single-shot-first models.

🌏 Bilingual & Multilingual Text Images

Representative prompt

An emotional, cinematic travel vlog YouTube thumbnail. The main subject is a young woman wearing a light, airy ivory linen dress, gracefully leaning against a whitewashed stone balcony. She gazes out toward the calm, shimmering Mediterranean Sea, with a gentle sea breeze lifting her hair.

The background features the Amalfi Coast in Italy at sunrise. In the distance, pastel-colored houses are scattered across the cliffs, creating a soft, picturesque landscape. The sky shows a delicate dawn gradient of lavender, blush pink, and pale gold.

The composition uses strong depth of field: the ocean and village in the background are rendered in a dreamy, soft bokeh blur. A wide area of negative space is intentionally left on the left side of the frame.

In this negative space, elegant typography is placed:

A main title in delicate, handwritten-style Japanese text: “波の音と、私だけの時間”

Below it, a refined, ultra-light sans-serif English subtitle: “A journey to find quiet freedom and healing. Amalfi Coast, Italy”

The overall aesthetic is minimal, airy, and slightly nostalgic. Lighting is soft and cinematic, with subtle morning lens flare reflecting on the stone balcony. A gentle glowing sunrise atmosphere and fine film grain add a vintage photographic feel.

The color palette is composed of soft pastel blues, warm ivory tones, and dawn pink hues, perfectly suited for a 16:9 widescreen layout.

Review note

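Bilingual text handling was notably stable: the Japanese title and English subtitle in this prompt stayed legible, and bilingual labels in general (including Chinese and English) remained cleaner than most open-weight alternatives in the same complexity range.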

🛍️ Product Lifestyle Shots

Representative prompt

A high-end commercial beverage advertising poster in a semi-realistic illustration style with a vertical composition. At the center of the image, an orange 'Sparkling Citrus' can with condensation droplets is dynamically suspended in mid-air. Transparent orange juice and sparkling water burst explosively from around the can, surging upward and converging in fluid forms to shape the giant English word 'FRESH' in mid-air. Crystal-clear ice cubes, sliced fresh orange segments, lemon chunks, and green mint leaves fly around the scene. The background features a smooth gradient from soft orange to refreshing sky blue. The scene is filled with splashing juice droplets, fine water mist, and condensation vapor. Ample bright sunlight illuminates the scene with commercial-grade highlight quality, vibrant colors, extremely strong dynamic tension, and ultra-high-definition sharp focus.

Review note

Staged product scenes read convincingly for e-commerce hero shots; watch for micro-label legibility and specular highlights when prompts demand extreme macro clarity.

Feature Deep Dive

5 Official Showcase Categories

We scored visible outputs across the five official categories: Posters/Text, Film-like, Manga/Storyboard, Multilingual Text, and Multi-panel structured generation.

Interpretation: ERNIE Image is strongest when prompts require explicit structure and in-frame readable text.

| Category | Observed Quality | Notes |
|---|---|---|
| Posters / Text | Excellent | Legibility and placement stability are standout strengths. |
| Film-like | Very good | Organic mood is strong; hyperreal edge can vary by prompt. |

Structured Prompt Compliance

ERNIE Image follows multi-element instructions with above-average consistency for an open-weight model, especially when the prompt specifies composition, text payload, and panel relationships.

"4-panel manga with fixed protagonist identity, readable title text, bilingual product sign"

This is where ERNIE Image differentiates: the model is less likely to ignore constraints when prompts include explicit structure requirements.
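As a hedged illustration of "explicit structure requirements", this sketch assembles a multi-panel prompt in the `[Panel N]: ...` form used by the comic prompt in this review. The function name and the choice to restate the character description in every panel are assumptions for illustration, not documented ERNIE Image prompt rules:

```python
def build_panel_prompt(style: str, character: str, beats: list[str]) -> str:
    """Hypothetical helper: one header line plus one '[Panel N]: ...' entry per beat.
    The character description is repeated in every panel so the identity
    constraint is stated explicitly each time (a design assumption)."""
    header = f"A {len(beats)}-panel {style}, same protagonist in every panel: {character}."
    panels = [f"[Panel {i}]: {character}, {beat}" for i, beat in enumerate(beats, start=1)]
    return " ".join([header, *panels])

prompt = build_panel_prompt(
    "manga page with readable title text",
    "young courier in a yellow raincoat",
    [
        "runs through a rainy market",
        "slips on wet stones",
        "catches a falling parcel",
        "grins beside a shop sign reading 'OPEN'",
    ],
)
print(prompt)
```

Repeating the description costs a few tokens per panel but leaves the model less room to drift on outfit or face between frames; whether it helps with ERNIE Image specifically is worth testing against a version that states the character only once.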

Published Benchmark Snapshot

| Benchmark | Score / Rank |
|---|---|
| GenEval | 0.8856 (#1 open-weight) |
| LongTextBench | 0.9733 (#2 overall) |
| OneIG-ZH / OneIG-EN | 0.8351 (#2) / 0.8197 (#3) |

Prompt Patterns That Work Best

High-performing prompts usually include explicit text payload, composition constraints, and style directives in one compact instruction:

"Poster with readable headline + subtitle, centered hierarchy, dark teal art deco border"

"4-panel storyboard, same protagonist outfit and face, emotional progression frame by frame"

"Bilingual product label: English + Chinese, exact text lock, minimal Japanese aesthetic"
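One way to apply these patterns consistently is to keep the three parts separate until render time. The sketch below is a hypothetical helper (not part of any ERNIE Image tooling); quoting exact strings mirrors the example prompts in this review, which wrap literal text such as 'FRESH' in quotes:

```python
from dataclasses import dataclass, field

@dataclass
class StructuredPrompt:
    """Hypothetical helper: holds text payload, composition constraints, and
    style directives separately so each can be edited or A/B-tested alone."""
    subject: str
    text_payload: list[str] = field(default_factory=list)
    composition: list[str] = field(default_factory=list)
    style: list[str] = field(default_factory=list)

    def render(self) -> str:
        parts = [self.subject]
        if self.text_payload:
            # Quote each exact string that should appear in the image.
            parts.append("exact text: " + ", ".join(f'"{t}"' for t in self.text_payload))
        parts.extend(self.composition)
        parts.extend(self.style)
        return ", ".join(parts)

poster = StructuredPrompt(
    subject="Poster",
    text_payload=["SUMMER JAZZ NIGHT", "Friday 8 PM"],
    composition=["centered hierarchy"],
    style=["dark teal art deco border"],
)
print(poster.render())
# Poster, exact text: "SUMMER JAZZ NIGHT", "Friday 8 PM", centered hierarchy, dark teal art deco border
```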

Comparison Tables

| Feature | ERNIE Image | FLUX / Midjourney / SD |
|---|---|---|
| Text Rendering | Very strong | Often inconsistent (varies by model) |
| Instruction Following | Top open-weight tier | Mixed, model dependent |
| Structured Multi-panel | Strong | Inconsistent without heavy prompt tuning |
| Multilingual In-image Text | Strong (incl. Chinese) | Often weaker |
| Photorealism Ceiling | High | FLUX/MJ can exceed in specific realism shots |
| Open-weight Accessibility | ✅ Yes | MJ ❌ / FLUX: varies by variant / SD ✅ |
| ComfyUI / Diffusers Ecosystem | ✅ Supported | ✅ Strong in FLUX/SD stacks |
| Best Positioning | Text-heavy + structured generation | Style-first or realism-first generation |
| Entry Workflow | Fast browser + open ecosystem | Depends on each platform |

| Category | ERNIE Image | Alt Leader |
|---|---|---|
| Text Rendering | ⭐⭐⭐⭐⭐ | FLUX / SD: ⭐⭐⭐ |
| Instruction Following | ⭐⭐⭐⭐⭐ | FLUX / MJ: ⭐⭐⭐⭐ |
| Multi-panel Consistency | ⭐⭐⭐⭐⭐ | SD-family: ⭐⭐⭐ |
| Photorealistic Extremes | ⭐⭐⭐⭐ | FLUX: ⭐⭐⭐⭐⭐ |
| Developer Ecosystem | ⭐⭐⭐⭐⭐ | SD-family: ⭐⭐⭐⭐⭐ |
| Beginner Workflow Speed | ⭐⭐⭐⭐ | MJ / hosted tools: ⭐⭐⭐⭐ |

ERNIE Image vs FLUX vs Midjourney vs Stable Diffusion

| Use Case | Best Pick | Why |
|---|---|---|
| Text-heavy poster generation | ERNIE Image | More stable in-image typography and layout intent |
| Pure photoreal hero shots | FLUX / Midjourney | Can outperform on realism edge cases |
| Structured multi-panel storytelling | ERNIE Image | Better continuity with explicit panel prompts |
| Custom LoRA flexibility | Stable Diffusion | Mature LoRA ecosystem remains broader |
| Prompt instruction precision | ERNIE Image | Higher consistency on constraint-heavy prompts |
| Open ecosystem developer stack | ERNIE Image / SD | ComfyUI + Diffusers + deployment compatibility |

Who Should Use ERNIE Image?

| Profile | Recommendation | Why | Priority | Notes |
|---|---|---|---|---|
| Poster designers | ✅ Recommended | Text rendering + layout fidelity | High | Strong for headline-heavy assets |
| Manga creators | ✅ Recommended | Panel consistency and structured prompts | High | Good narrative continuity |
| Bilingual content teams | ✅ Recommended | English + Chinese text robustness | High | Best-in-class open-weight positioning |
| E-commerce visual teams | ✅ Recommended | Product-shot + label workflows | Medium | Fast iteration in browser |
| Storyboard designers | ✅ Recommended | Multi-element instruction adherence | Medium | Works well with prompt templates |
| Extreme photoreal-only users | ❌ Not first choice | FLUX often wins on realism edge cases | Low | Use ERNIE for text/structure tasks |
| Heavy custom LoRA users | ❌ Not first choice | SD ecosystem is broader for LoRA depth | Low | Consider SD for deep customization |

Decision summary:

- Best-in-class for text rendering + structured generation

- Very strong multilingual in-image text behavior

- Ecosystem options: ComfyUI, AI-Toolkit, Unsloth, Diffusers, SGLang

- Less ideal if your only target is maximum photorealism

- Less ideal if your workflow depends on deep custom LoRA stacks

Why Trust This Review

This review is based on independent testing with no vendor compensation. ERNIE Image did not sponsor, review, or influence this article.

Frequently Asked Questions

Is ERNIE Image free to use?

ERNIE Image is open-weight and free to start with for experimentation. If you need hosted workflow speed, queue priority, and other production conveniences, paid credits or plans can still be worthwhile for teams.

Final Verdict: ERNIE Image is best-in-class for text rendering + structured generation.

Our 2026 verdict gives ERNIE Image an overall 4.5/5. It leads open-weight peers where many teams struggle most: readable in-image text, strict prompt adherence, multilingual labeling, and multi-panel structure continuity.

If your workflow depends on posters, bilingual assets, manga/storyboards, and structured visual instructions, ERNIE Image should be your first benchmark. If photorealism is your only goal, FLUX-class models are still worth testing in parallel.